Every week there’s a new “best AI coding tools for founders” thread on X. Cursor fans fight Copilot loyalists. Claude devotees call everything else autocomplete with extra steps. Windsurf users quietly ship in the corner.

Here’s the thing: they’re all arguing the wrong question.

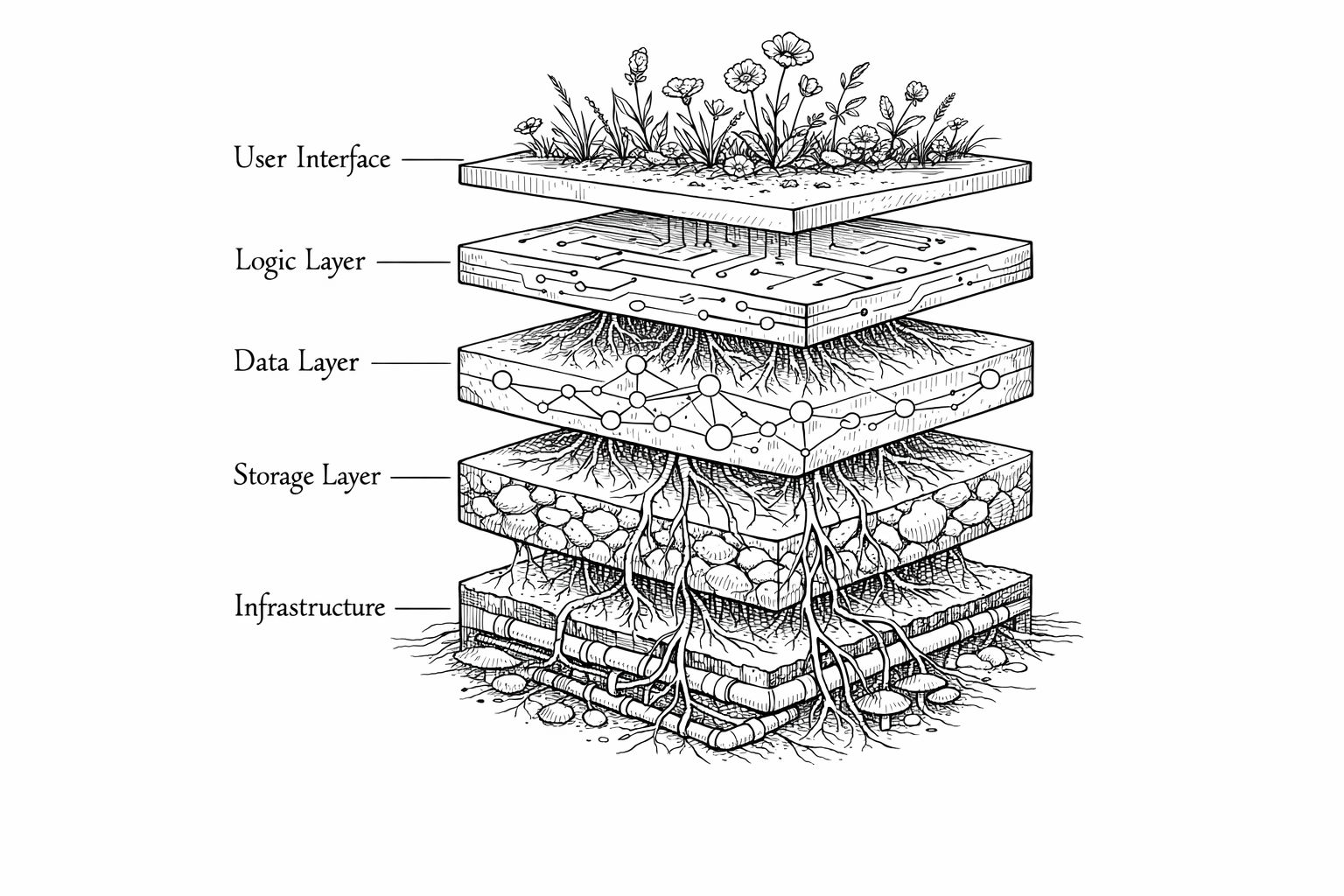

The question isn’t “which AI tool is best.” It’s “which AI tool is best at this specific stage of building my product.” Because Claude, Cursor, and Copilot aren’t competitors. They’re layers of the same workflow.

I’m going to walk you through the three stages of shipping a SaaS solo — architecture, active coding, and debugging/shipping — and tell you exactly which tool wins at each one. No “it depends.” No hedge words. Just the stack that actually works.

Why this post is different

We already have deep comparisons of Cursor vs Windsurf vs other editors. This post isn't organized by tool — it's organized by build stage. That's the framework that actually helps you decide what to pay for.

The Tool Debate Misses the Point

Most comparison posts throw three tools on a table, list their features side by side, and say “pick the one that fits your workflow.” That’s useless advice.

Building a SaaS solo isn’t one activity. It’s at least three:

- Architecture & planning — deciding what to build, how the pieces fit, what the data model looks like

- Active coding — writing features, wiring up APIs, building the UI

- Debugging & shipping — fixing the things that break, getting to production, staying there

Each stage has a different bottleneck. And each bottleneck has a different best tool.

The solo-founded startups taking off right now understand this intuitively. Solo founders went from 23.7% of new startups in 2019 to 36.3% by mid-2025. As YC partner Jared Friedman put it: “What used to take 5 engineers now takes 1 engineer with AI tools.”

But only if that one engineer picks the right tools for the right moments.

Stage 1: Architecture & Planning — Claude Wins, and It’s Not Close

Before you write a single line of code, you need to think. Database schema. API design. Auth flow. How multi-tenancy will work. What happens when a user upgrades their plan.

This is where most vibe coders skip ahead and pay for it later. (We covered the cost of that mistake in our piece on vibe coding mistakes that kill launches.)

Claude, specifically Claude with its 200K-token context window, is the best thinking partner available for this stage. It’s not autocomplete. It’s a co-architect.

Here’s why:

- It can hold your entire spec in context. Feed it your PRD, your database schema, and your existing codebase structure, and it reasons across all of it. Copilot’s inline suggestions, by contrast, mostly see your current file and open tabs.

- It pushes back. Ask Claude to review your architecture and it’ll flag problems you didn’t think of — race conditions in your webhook handler, missing RLS policies, edge cases in your billing logic.

- It generates actual artifacts. Not just suggestions — full database schemas, API route structures, migration files.

Roman Hauptvogel built a production SaaS (468 commits, 695 tests, 25 API endpoints) in 4 weeks using Claude Code. His key takeaway? Claude handled 54% of the total work — but the biggest ROI came from the planning stage, where it translated business rules into enforceable database constraints on the first try.

Architecture prompts that actually work

Don't say: "Build me an auth system."

Do say: "I'm building a multi-tenant SaaS with Supabase. Users belong to organizations. Each org has admin and member roles. I need Row Level Security policies that ensure users can only see data from their own org, and admins can manage team settings. Show me the schema, the RLS policies, and the edge cases I'm not thinking of."

The more specific and constrained your prompt, the better Claude performs as an architecture partner.
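Before trusting the RLS policies Claude drafts for a prompt like the one above, it helps to pin the rules down in plain code. The sketch below is a hypothetical, in-memory TypeScript model of the rules that prompt describes: users belong to organizations, each org has admin and member roles, users read only their own org’s data, and only admins manage team settings. The names `Membership`, `canRead`, and `canManageSettings` are illustrative assumptions. This is not Supabase’s API and not RLS itself, just a spec you can turn into test cases.

```typescript
// Hypothetical model of the access rules from the architecture prompt.
// Not Supabase or Postgres RLS code: a plain-TypeScript spec for test cases.

type Role = "admin" | "member";

interface Membership {
  userId: string;
  orgId: string;
  role: Role;
}

interface OrgRow {
  id: string;
  orgId: string; // every tenant-owned row records which org owns it
}

// A user's role in one specific org; undefined means "not a member".
// Modeling memberships as a list also covers users who belong to several orgs.
function roleIn(memberships: Membership[], userId: string, orgId: string): Role | undefined {
  return memberships.find((m) => m.userId === userId && m.orgId === orgId)?.role;
}

// Rule 1: admins and members can read rows from their own org only.
function canRead(memberships: Membership[], userId: string, row: OrgRow): boolean {
  return roleIn(memberships, userId, row.orgId) !== undefined;
}

// Rule 2: only an org's admins can change that org's team settings.
function canManageSettings(memberships: Membership[], userId: string, orgId: string): boolean {
  return roleIn(memberships, userId, orgId) === "admin";
}

// Example data: alice administers "acme", bob is a member there,
// and carol belongs only to "globex".
const memberships: Membership[] = [
  { userId: "alice", orgId: "acme", role: "admin" },
  { userId: "bob", orgId: "acme", role: "member" },
  { userId: "carol", orgId: "globex", role: "member" },
];

const invoice: OrgRow = { id: "inv_1", orgId: "acme" };

console.log(canRead(memberships, "bob", invoice)); // true
console.log(canRead(memberships, "carol", invoice)); // false: different org
console.log(canManageSettings(memberships, "bob", "acme")); // false: not an admin
console.log(canManageSettings(memberships, "alice", "acme")); // true
```

Each of those four checks can become a test against the real database: run the equivalent query as that user and confirm the generated policies allow or block the same rows.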

Stage 2: Active Coding — Cursor Is the Winner

Once you know what you’re building, you need to build it fast. This is the stage where your AI coding tool choice has the biggest impact on velocity.

The winner: Cursor. Here’s the honest breakdown of why — and what you lose by picking wrong.

Cursor vs Copilot vs Windsurf: Active Coding Comparison

| Feature | Cursor ($20/mo) | GitHub Copilot ($10/mo) | Windsurf ($15/mo) |
|---|---|---|---|
| Tab completion speed | Under 300ms | 400–600ms | ~350ms |
| Single-line accuracy | 70% | 60% | ~65% |
| Multi-line accuracy | 50% | 35% | ~45% |
| Multi-file editing | Excellent (Composer + Agent) | Limited (single-file focus) | Good (Cascade Flows) |
| Codebase context | Full indexing + @-mentions | Current file + open tabs | Session-based learning |
| Background agents | Up to 8 parallel agents | Not available | Parallel via Git worktrees |
| IDE lock-in | Cursor IDE only (VS Code fork) | 10+ IDEs | Windsurf IDE only |
| Best for | Production SaaS, large codebases | Quick edits, multi-IDE users | Greenfield projects, prototyping |

Why Cursor wins for solo founders

Codebase context is the killer feature. When you’re a solo founder, your codebase grows fast and you’re the only person who knows where anything is. Cursor indexes your entire project and lets you reference files with @-mentions. Ask it to “update the pricing page to match the new plan structure” and it knows where your pricing component, your Stripe integration, and your plan config all live.

Copilot doesn’t do this nearly as well. It works great for single-file autocomplete, and at $10/month, it’s cheap. But its multi-file editing is limited, so coordinating one change across 5 files takes far more manual steering. For a solo founder building a real SaaS with dozens of interconnected files, that’s a dealbreaker.

A University of Chicago study found a 39% increase in merged pull requests among Cursor users. Marc Lou, the indie hacker who’s shipped 12 SaaS products solo, switched from VS Code + Copilot to Cursor and said it “doubled his shipping speed overnight.”

When you’d pick something else

Copilot makes sense if you refuse to leave JetBrains or need multi-IDE support. It’s also half the price. For quick scripts, small edits, and hobby projects, it’s fine.

Windsurf has a genuinely unique feature in its Flow mode — it learns your coding patterns over about 48 hours and its suggestions get progressively more tailored. If you’re starting a brand new project from scratch, Windsurf’s autonomous Cascade agent is fast for greenfield work. But for production systems where you need control over every change, Cursor’s diff-and-approve workflow is safer.

For a deeper look at how these tools compare for building in public, check out our full vibe coding tools guide.

Stage 3: Debugging & Shipping — The Combo Play

Here’s where solo founders hit the wall. Your feature works locally. Then you deploy and everything breaks. CORS errors. Environment variable mismatches. That one edge case in your webhook handler that only shows up with real Stripe events.
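One common version of that webhook edge case: Stripe can deliver the same event more than once, so a handler that passes local tests with hand-crafted payloads can double-apply an upgrade in production. The usual guard is to record processed event IDs and skip repeats. Below is a minimal, hypothetical TypeScript sketch of that idea. The `customer.subscription.updated` event type is real, but the payload shape is simplified, an in-memory `Set` stands in for a database table, and there is no Stripe SDK call or signature verification.

```typescript
// Hypothetical, simplified event shape: real Stripe events nest the subscription
// object under data.object and carry many more fields.
interface WebhookEvent {
  id: string; // unique per event; a redelivery reuses the same id
  type: string;
  data: { orgId: string; plan: string };
}

// Stand-ins for persistent storage. In production, processed ids belong in a
// database table with a unique constraint, written in the same transaction as
// the state change; an in-memory Set is per-process and lost on restart.
const processedEventIds = new Set<string>();
const planByOrg = new Map<string, string>();
let upgradeEmailsSent = 0;

function handleWebhook(event: WebhookEvent): "processed" | "duplicate" {
  // Guard first: a redelivered event is acknowledged without repeating side effects.
  if (processedEventIds.has(event.id)) {
    return "duplicate";
  }

  if (event.type === "customer.subscription.updated") {
    planByOrg.set(event.data.orgId, event.data.plan);
    upgradeEmailsSent += 1; // side effect that must not run twice
  }

  // Record the id only after the work above succeeded, so a failed attempt
  // can still be retried.
  processedEventIds.add(event.id);
  return "processed";
}

const evt: WebhookEvent = {
  id: "evt_123",
  type: "customer.subscription.updated",
  data: { orgId: "acme", plan: "pro" },
};

console.log(handleWebhook(evt)); // processed
console.log(handleWebhook(evt)); // duplicate (same event delivered again)
console.log(upgradeEmailsSent); // 1
```

Without the `processedEventIds` check, the second call would send a second upgrade email. The sketch does not handle out-of-order delivery, which needs its own strategy.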

No single tool dominates this stage. The fastest path to production is a combination:

Claude Code for agentic debugging. Claude Code runs in your terminal and can autonomously write-run-fix in loops. It scores 80% on first-attempt accuracy for agentic tasks — compared to Copilot’s 40% and Cursor’s 65%. When you have a bug that spans multiple files and requires tracing the logic through your whole stack, Claude Code can scan the codebase, identify the issue, write the fix, run the tests, and iterate. That’s the workflow that gets you from “it broke in production” to “fixed and deployed” in minutes instead of hours.

Cursor for surgical edits. Once Claude Code identifies the problem, Cursor is faster for making the precise, targeted fix — especially when you need to see the diff before it lands. Its Agent mode can also run terminal commands, but the real value is the visual feedback loop.

Evan D’Souza — a non-developer building a hotel management SaaS with 245 API routes and 719 source files — runs this exact workflow: Claude for reasoning through complex problems, Cursor for implementing the solutions. He went from zero coding experience to production software serving real users.

The counterpoint worth knowing

A study from METR found that AI tools actually increased task completion time by 19% for experienced developers on familiar codebases. The takeaway: AI tools give you the biggest speed boost on unfamiliar problems and new code. If you already know exactly how to fix something, just fix it. Don't prompt-engineer your way to a solution you could type in 30 seconds.

The Minimal Viable AI Stack: 2 Tools, $40/Month

If you could only use two tools to build and ship a SaaS solo, here’s what I’d pick:

Claude Pro ($20/month) + Cursor Pro ($20/month)

That’s it. $40/month total.

The $40/Month Solo Founder Dev Workflow

Step 1: Plan with Claude in the morning

Paste your current spec and today's goal. Ask Claude to break the feature into implementation steps, flag edge cases, and draft the database migration if needed. 15 minutes of planning saves 2 hours of debugging.

Step 2: Build in Cursor

Open Cursor with your codebase indexed. Use Agent mode for multi-file features. Use tab completion for everything else. Save Claude’s architecture plan as a file in your repo and reference it with @-mentions to keep context tight.

Step 3: Debug with Claude Code

When things break (they will), drop into Claude Code in your terminal. Let it trace the issue across your codebase autonomously. Review its fix, apply it, run tests.

Step 4: Ship and repeat

Deploy to Vercel/Railway. If something breaks in production, Claude Code's agentic loop gets you to a fix faster than any other tool. Push the patch and move on.

Why not add Copilot too?

Because overlap costs you more than money — it costs you cognitive overhead. Cursor already uses Claude and GPT models under the hood. Adding Copilot means maintaining two autocomplete systems that sometimes conflict. Marc Lou runs just Cursor. The OPC Community recommends just Cursor + Claude Code. Keep it simple.

If you’re watching your budget, Copilot’s free tier (2,000 completions + 50 chats/month) is a decent starting point. But the moment you’re building full-time, Cursor Pro is worth every dollar.

For the full breakdown on picking your AI stack — including hosting, design, and automation tools — see our complete AI stack guide for SaaS.

The minimal viable AI stack for solo SaaS founders in 2026

| Stage | Best Tool | Monthly Cost | Why It Wins |
|---|---|---|---|
| Architecture & Planning | Claude Pro | $20 | 200K context, pushes back on bad ideas, generates full schemas |
| Active Coding | Cursor Pro | $20 | Codebase indexing, sub-300ms completions, multi-file Agent mode |
| Debugging & Shipping | Claude Code + Cursor | $0 extra (included) | 80% agentic accuracy + visual diff review |
| Total | | $40/month | Covers all three stages without overlap |

Stop Comparing Tools. Start Shipping.

The founders actually making money with AI tools aren’t the ones endlessly debating Cursor vs Copilot on X. They’re the ones who picked a stack, learned it deeply, and started building.

The data is clear: Claude + Cursor covers architecture, coding, debugging, and shipping for $40/month. That’s less than a single freelance blog post used to cost.

What used to take a team of five now takes one founder with the right vibe coding workflow. The tools are there. The only question left is what you’re going to build with them.

Your code is handled. What about your content?

You've got the AI stack for building. But shipping a SaaS also means SEO, blog posts, and content marketing — and that's another time sink most founders ignore. Vibeblogger handles the entire blog operation automatically, from keyword research to published post.
